GeneveOS Memory Management Functions

Memory management in MDOS is available via XOP 7. In the MDOS sources the relevant files are manage2s and manage2t.

Fast RAM is all memory with 0 wait states (SRAM), while Slow RAM is 1 wait state RAM, offered by the DRAM chips. The on-chip RAM is not considered here as it is not manageable by MDOS. The 256 bytes of on-chip RAM are always visible in the logical address area F000-F0FB and FFFC-FFFF. Any page that is mapped to the E000-FFFF area will be overwritten by operations in that area; this must be considered when using the E000-FFFF area.

Two classes of memory management functions are available: XOPs for user tasks, and XOPs exclusively used by the operating system.

Introduction and terminology

As was common in TI application programming, buffers were usually placed statically in the program, that is, a portion of memory (like 256 bytes) was reserved in the program code to be used for storing data. Assembly language offers the BSS and BES directives for this purpose. This is not recommended when the buffer is very big, like 12 KiB, since that space bloats the program code that must be loaded on application start. It is impossible for even larger buffers like 100 KiB, which exceed the 64 KiB logical address space. In such cases, dynamic allocation is the typical solution, as known from other systems.

The Geneve offers 512 KiB of slow RAM and another 32 KiB of fast RAM. Starting from MDOS 2.5, the fast RAM must be expanded to 64 KiB. Obviously, this memory can only be effectively used with the help of memory management functions.

Logical address space and execution pages

The logical address space is the set of addresses as seen from the CPU. The Geneve uses a 16-bit address bus, which means that the logical address space is 64 KiB large. The CPU cannot represent addresses outside this area, so transparent access to more than 64 KiB is impossible. This is a limitation for application design.

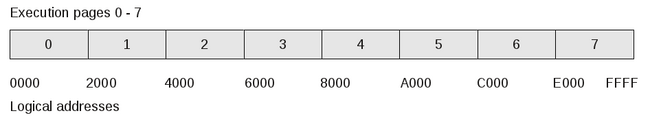

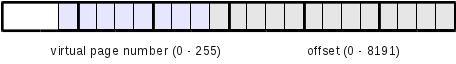

The connection between execution pages and the logical addresses can be seen here:

Execution pages are associated with logical addresses in a fixed way, so the execution page can easily be calculated from the logical address: the first three bits determine the execution page, and the remaining 13 bits are the offset within the 8 KiB memory space of this execution page.
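The split into execution page and offset can be sketched in a few lines of Python. This is only an arithmetic illustration, not MDOS code; the function name `split_logical` is made up for this example.

```python
PAGE_BITS = 13                       # 8 KiB = 2**13 bytes per execution page
OFFSET_MASK = (1 << PAGE_BITS) - 1   # 0x1FFF, the low 13 bits


def split_logical(addr):
    """Return (execution page, offset) for a 16-bit logical address."""
    if not 0 <= addr <= 0xFFFF:
        raise ValueError("logical addresses are 16 bits wide")
    return addr >> PAGE_BITS, addr & OFFSET_MASK


page, offset = split_logical(0xA080)
print(page, hex(offset))   # 5 0x80
```

The logical address A080 thus lies in execution page 5 at offset 0080.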

Physical address space and physical pages

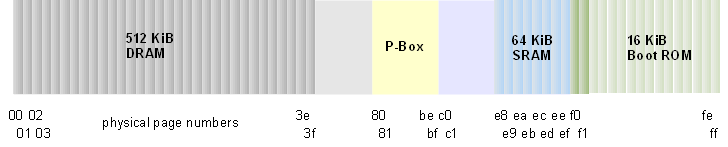

The physical address space comprises the complete memory area of the Geneve from the hardware viewpoint. There is no consideration of allocation to tasks. The physical address space consists of 256 pages of 8 KiB each, together 2 MiB.

The first 64 pages are the DRAM area (the slow RAM); from page number E8 to EF we find 8 pages of fast SRAM. The original configuration of the Geneve only included 32 KiB of SRAM, but in most cases this was expanded to 64 KiB. From page F0 to FF we find the Boot ROM; there is room for 128 KiB, but only 16 KiB are actually used, since the page address is not completely decoded in this area: All even pages are the same page, and all odd pages are the same page as well.

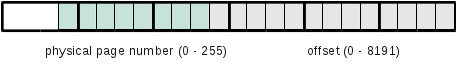

Physical addresses can be represented as shown here:

Accordingly, physical addresses range from 000000 to 1FFFFF. The TMS 9995, however, cannot use physical addresses since they do not fit into the 16-bit width of the address bus. The Geneve architecture includes a memory mapper which is used to map the logical address space of the CPU to the physical address space. So when the CPU accesses the logical address A080, the mapper determines which physical address this corresponds to. For instance, if the mapper contains the value 2C for execution page 5, the logical address A080 maps to physical address 058080.
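The translation can be checked with a small Python model. It treats the mapper as a list of eight physical page numbers, one per execution page; the list representation and the name `translate` are assumptions made for this sketch and say nothing about how the mapper hardware is actually programmed.

```python
def translate(logical, mapper):
    """Map a 16-bit logical address to a 21-bit physical address."""
    exec_page = logical >> 13        # top 3 bits select the mapper entry
    offset = logical & 0x1FFF        # low 13 bits pass through unchanged
    return (mapper[exec_page] << 13) | offset


mapper = [0x00] * 8                  # hypothetical mapper contents
mapper[5] = 0x2C                     # execution page 5 -> physical page 2C
print(f"{translate(0xA080, mapper):06X}")   # 058080
```

The physical page number simply replaces the upper three bits of the logical address, which is why 8 bits of page number plus 13 bits of offset give the 21-bit physical address.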

Local (virtual) pages

MDOS allows for multitasking. In principle, multitasking is possible whenever we have means of passing control between independent applications. This can be done explicitly (by yielding) or timer-controlled. Apart from that, when several tasks are running in the system we have to take care that each task gets a part of the global memory area exclusively for itself. But how can we know which application uses which part of the physical memory?

Consequently, access to memory must be "virtualized"; we do not allow direct access to the physical memory but map "local" or "virtual" addresses to physical addresses. This indirection has an important advantage in multitasking systems: Every task may run as if it were the only one in the system. Although several tasks may access memory at the same local address, the actual physical memory is different.

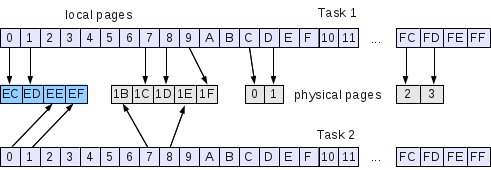

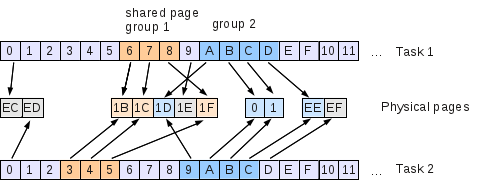

The illustration shows how the "local pages" of the task are mapped to physical pages. We see that a task may use up to 256 local pages, covering the complete physical space. This is an atypical, extreme case, though. A task should only make use of those pages it really needs. This means that many, in fact most, of the local pages are unassigned.

In this example, another task has been loaded in the system, but although some of the local pages it uses are also used by the other task, they access different physical pages. Each task gets its own collection of pages from the pool of free physical pages.

When a task is done, the allocated memory should be returned to the system; otherwise the pool of pages dries up, and no more tasks can be loaded and run (a so-called memory leak).

Now what do virtual addresses look like? - Just like physical addresses:

In fact the local page number just needs to be mapped to the physical page number if that local page has got such an associated physical page.

As said above, the CPU can only work with logical addresses, not with physical addresses. This is also true for virtual addresses. We can, however, write programs that take care of the proper paging, like the XOPs presented here.

As you may have noticed, we are using the terms local address/page and virtual address/page synonymously, but we recommend avoiding the term virtual addressing and speaking about local addresses instead. The reason is that virtual memory management has a distinctly different meaning in common computer architectures.

Virtual address spaces in other architectures

Virtual memory and its management in PC architectures refers to the mechanism by which a part of the logical address is interpreted as an index in a page table (or a cascaded page table). The page table contains pointers to physical memory and also to space on a mass storage, allowing for swapping pages in and out. The Geneve memory organization is similar to this concept as a pointer into a table is translated to a prefix into the physical address space.

However, and this is a notable difference, there is no support for virtual addresses in the TMS 9995. We may, of course, write applications that take care of memory mapping, but this is a pure software solution. The XOPs as shown below, and also other XOPs like those for file access, provide such a software implementation of virtual addressing.

PC architectures with the Intel 8086 had an address bus of 20 bits (1 MiB address space), the Intel 80286 had an address bus width of 24 bits which already allowed for 16 MiB address space. Modern PCs have at least a 32-bit wide address. A process may thus make use of a maximum of 2^32 bytes or 4 GiB. Here the address space is (or better was) larger than the amount of memory in the system. (Now you know why you might want to change to a 64-bit architecture.)

With our Geneve, however, the address space is smaller than the amount of available memory, and we do not have any kind of segment registers as in the 8086 either. This means that we will never have a similarly simple, expandable memory model as known from the PC world. Any kind of memory paging must be handled on the software level.

The concept as presented here has some similarities with the EMS technology on PCs, which was dropped with the availability of the 80386 and newer processors featuring the protected mode that allowed programs to make use of the virtual memory management.

Shared pages

Memory pages can be shared among tasks. This is simply done by deliberately assigning the same physical page to different tasks. Since shared pages can be used by many tasks they cannot be allocated and freed in the same way as the usual "private" pages. Also, we want to be able to share more than a single page and still retain some relation between them. Therefore, shared page groups are introduced.

The illustration shows some important properties of the concept of shared pages:

- Shared pages can only be shared as a complete group. If you take one, you take all. This is reasonable since the task that offers pages to be shared should assume that all pages to be shared are actually used in the other tasks.

- Shared groups are always contiguous; they have no holes. Assuming that, in the example, task 1 wants to share the pages 6-8 and A-D, it must declare two distinct groups 1 and 2.

- Groups are identified by a type. Unlike in the illustration, a type is not a color but a number from 1 to 254 (not 0 and not 255). Types may be freely defined, but it may be reasonable to find a convention on the numbers so that, for instance, tasks know by the number whether the shared group contains code or data.

- The creation of a group is done by one of the tasks declaring some of its private pages as shared, assigning a type. The other tasks may then import these pages.

- The importing task must have a gap in its local page allocation which is large enough to contain the group.

- A type may be reused when all tasks have released the shared group of this type.

- Local page 0 may never be a member of a shared group since it contains essential information for the task.

User-task XOPs

User-task XOPs are available for use in application programs. Here is a typical example:

    SEVEN  DATA 7
           ...
    GETMEM LI   R0,1        // Allocate
           LI   R1,5        // 5 pages (=40 KiB)
           LI   R2,10       // Starting at local page 10
           CLR  R3          // Slow mem is OK
           XOP  @SEVEN,0    // Invoke XOP
           ...

Available memory pages

Opcode: 0

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0000) | Error code (always 0) |
| R1 | | Number of free pages |
| R2 | | Number of fast free pages |
| R3 | | Total number of pages in system |

This function is used to query the free space in the system. No changes are applied. The total number of pages is the maximum number of allocatable pages.

Allocate pages

Opcode: 1

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0001) | Error code (0, 1, 7, 8) |
| R1 | Number of pages | Number of pages actually fetched to complete map as required |
| R2 | Local page number | Number of fast pages fetched |
| R3 | Speed flag | |

Using this function a task can allocate pages from the pool of free pages. It must specify at which local page number the requested sequence of physical pages shall be installed.

Using the speed flag the task can control the preference for the memory type. If set (not 0), the system tries to first allocate fast memory, and when nothing is left, continues with slow memory. The opposite happens when the flag is 0.

Once a local page has been associated with a physical page, it keeps this association until it is explicitly freed. If another allocation request overlaps with an earlier one, only the local pages that are still unassigned get new physical pages.

As shown here, first the pages 3-5 were allocated, returning 3 as the number of actually fetched pages. Then the pages 2-7 were allocated, but pages 3-5 are skipped, so again we get only 3 pages reported as allocated.
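The overlap behaviour and the speed flag can be illustrated with a small Python model. This is a sketch under stated assumptions, not the MDOS implementation: the class `TaskMemory`, the physical page numbers in the pools, and the choice to allocate nothing at all when the request cannot be satisfied are all hypothetical.

```python
class TaskMemory:
    """Toy model of one task's local pages and the system's free pools."""

    def __init__(self, free_slow, free_fast):
        self.free_slow = list(free_slow)   # free physical pages (slow RAM)
        self.free_fast = list(free_fast)   # free physical pages (fast RAM)
        self.local = {}                    # local page -> physical page

    def allocate(self, count, first_local, prefer_fast):
        """Model of opcode 1: returns (error, pages fetched, fast pages fetched)."""
        # Only local pages that are still unassigned need a physical page.
        wanted = [p for p in range(first_local, first_local + count)
                  if p not in self.local]
        if len(wanted) > len(self.free_slow) + len(self.free_fast):
            return 1, 0, 0                 # assumption: nothing is allocated
        if prefer_fast:
            first, second = self.free_fast, self.free_slow
        else:
            first, second = self.free_slow, self.free_fast
        fast = 0
        for page in wanted:
            pool = first if first else second   # fall back when preferred pool is empty
            if pool is self.free_fast:
                fast += 1
            self.local[page] = pool.pop(0)
        return 0, len(wanted), fast


task = TaskMemory(free_slow=range(0x10, 0x20), free_fast=range(0xE8, 0xF0))
print(task.allocate(3, 3, False))   # (0, 3, 0)  local pages 3-5
print(task.allocate(6, 2, False))   # (0, 3, 0)  only 2, 6 and 7 are new
print(task.allocate(2, 20, True))   # (0, 2, 2)  fast memory preferred
```

The second call requests six pages (2-7) but reports only three, because local pages 3-5 already have physical pages behind them.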

Free pages

Opcode: 2

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0002) | Error code (0, 2) |
| R1 | Number of pages | |
| R2 | Local page address | |

Returns allocated pages to the pool of free pages. When the application terminates, all assigned pages are returned automatically, so this function is mainly used when the application explicitly wants to return memory during its runtime. Shared pages cannot be freed by this function.

Error code 2 is returned when the local page address (R2) is 0; page 0 cannot be freed in this way.

Map local page at execution page

Opcode: 3

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0003) | Error code (-, 2, 3) |
| R1 | Local page number | |
| R2 | Execution page number | |

By this function, a local page is mapped into an execution page. While pure data operations (as done in some XOP functions) may be done using local (virtual) addresses, execution of code in these areas requires that the page be mapped into an execution page, which means it gets logical addresses. Logical addressing in the code usually requires that a particular execution page be used. For instance, if the code contains a subroutine that is expected at a certain address, it will fail to work when located elsewhere.

Error code 2 is returned when the local page number or the execution page number is 0.

Error code 3 is returned when the execution page number is higher than 7 or the local page is unassigned.

Get address map

Opcode: 4

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0004) | Error code (-, 8) |
| R1 | Pointer to buffer | Count of pages reported |
| R2 | Buffer size | |

Gets the map table. On return of the call, the memory locations pointed to by R1 contain a sequence of physical page numbers that have been allocated. R1 contains the length of this sequence. The value at the pointer position is the physical page number associated with local page 0; next is the physical page number associated with local page 1 and so on. The table is trimmed, which means it stops with the last assigned page. Unassigned pages in between are indicated by a FF value.

Error code 8 is returned when the buffer is too small to hold the list of numbers of allocated pages.
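The layout of the returned table can be sketched in Python as follows. The function `address_map` and the sample page numbers are hypothetical; the sketch only models the trimming and the FF markers described above, not the real XOP.

```python
def address_map(local_to_phys, buffer_size):
    """Model of opcode 4: returns (error, count, table bytes).

    local_to_phys -- dict: local page number -> physical page number
    buffer_size   -- size of the caller's buffer in bytes
    """
    if not local_to_phys:
        return 0, 0, bytes()
    last = max(local_to_phys)            # table is trimmed after this page
    table = bytes(local_to_phys.get(page, 0xFF) for page in range(last + 1))
    if len(table) > buffer_size:
        return 8, 0, bytes()             # buffer too small for the list
    return 0, len(table), table


error, count, table = address_map({0: 0x3A, 1: 0x3B, 4: 0xE8}, buffer_size=16)
print(error, count, table.hex().upper())   # 0 5 3A3BFFFFE8
```

Local pages 2 and 3 are unassigned in this sample, so they appear as FF between the entries for pages 1 and 4, and the table ends with page 4.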

Declare shared pages

Opcode: 5

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0005) | Error code |
| R1 | Number of pages to declare as shared | |
| R2 | Local page number | |
| R3 | Type to be assigned to shared pages | |

Following the above description of shared memory, this function must be used to initially define a shared pages group. In the example, task 1 previously executed this function with R1=3, R2=6, R3=1 and once more with R1=4, R2=>0A, R3=2.

Release shared pages

Opcode: 6

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0006) | Error code (0, 6, 8) |
| R1 | Type | |

When a task does not need the shared memory anymore or wants to free its local pages, it calls this function to release the shared memory on its side. As we cannot release single pages, the group identifier (type) must be passed.

Error code 6 occurs when the given type is unknown (not declared before).

Import shared pages

Opcode: 7

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0007) | Error code (0, 2, 6, 7, 8) |
| R1 | Type | |
| R2 | Local page number for start of shared area | |

Imports a group of shared pages. In the example above, task 2 has executed this function with R1=1, R2=3 and a second time with R1=2, R2=9.

The error codes indicate that all worked well (0), that the task attempted to overlay local page 0, which is not allowed (2), that the group identifier is invalid or unknown (6), or that the task attempted to overlay an already assigned private page (7). Task 2 in the example would have run into this problem if it had tried to import group 2 starting at local page 0A, and local page 0D was already associated with physical page EF.
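The checks behind these error codes can be modelled in Python. This is a minimal sketch, assuming that the checks happen in the order shown and that a group is fully described by its list of physical pages; the function `import_group` and all physical page numbers except EF are made up for illustration. Error code 8 (out of table space) is not modelled.

```python
def import_group(local, groups, gtype, first_local):
    """Model of opcode 7; returns an error code as listed for this function.

    local  -- dict: local page -> physical page of the importing task
    groups -- dict: type -> list of physical pages of the shared group
    """
    if first_local == 0:
        return 2                       # local page 0 must not be overlaid
    if not 1 <= gtype <= 254 or gtype not in groups:
        return 6                       # invalid or unknown type
    pages = groups[gtype]
    targets = range(first_local, first_local + len(pages))
    if any(page in local for page in targets):
        return 7                       # gap too small: page already assigned
    local.update(zip(targets, pages))  # the whole group is taken at once
    return 0


# Hypothetical groups declared by task 1: type 1 has 3 pages, type 2 has 4.
groups = {1: [0x21, 0x22, 0x23], 2: [0x30, 0x31, 0x32, 0x33]}
task2 = {0x00: 0x10, 0x0D: 0xEF}       # local page 0D is already private

print(import_group(task2, groups, 1, 0x03))   # 0
print(import_group(task2, groups, 2, 0x0A))   # 7, would overlay local page 0D
print(import_group(task2, groups, 2, 0x09))   # 0, pages 09-0C are free
```

Because a group can only be imported as a whole, a single occupied local page inside the target range is enough to reject the request.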

Get size of shared group

Opcode: 8

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0008) | Error code (0, 6) |
| R1 | Type | Number of pages in shared group |

With this function the task can determine the size of the indicated shared group. This is important to know so that the group can be imported successfully at a gap that is large enough.

Privileged XOPs (only available for operating system)

The following XOPs are used within MDOS itself to implement the memory management. They cannot be used from user tasks since they can easily corrupt the entire management, requiring a system reboot. They may be of interest for people attempting to continue MDOS development.

Release task

Opcode: 9

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (0009) | Error code (0, -1) |
| R1 | First node | |

Undoes all memory allocations for this task. This function is called by the system when a task terminates. The first node is the pointer in MEMLST. An error code of -1 (FFFF) is returned when this function is called by a user task.

Get memory page

Opcode: 10

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (000A) | Error code (0, 1, -1) |
| R1 | Physical page number | Pointer to node |
| R2 | Speed flag | (Page number from node) |

Gets the pointer to the node of a physical page. The node is used in the linked list of the page management. If R1 is from 0-FF, the result is the pointer to the node of the first free physical page. The speed flag is used to determine whether to find slow (0) or fast (not 0) memory. If R1 is logically higher than FF (usually with the high byte set to FF), the function will allocate a new page, depending on the speed flag.

R0 contains 0 if no error occurred (note that the page is still not assigned to a task); 1 means that the page is not available or there are no free pages. -1 is returned when the caller is a user task.

Free memory page

Opcode: 11

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (000B) | Error code (0, 8, -1) |
| R1 | Physical page number | Pointer to node |

The physical page is added to the list of free pages. Returns error code 8 if the page node could not be inserted in the list.

Free memory node

Opcode: 12

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (000C) | Error code (0, -1) |
| R1 | Pointer to node | |

Management function. The node is added to the list of free nodes.

Link memory node

Opcode: 13

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (000D) | Error code (0, -1) |
| R1 | Pointer to node | |
| R2 | Pointer to node to link to | |

Management function. Links memory nodes together to produce the linked list.

Get address map (system)

Opcode: 14

| Register | Input | Output |
|---|---|---|
| R0 | Opcode (000E) | -1 or count of valid pages |

Used by DSRs. Returns the mapping of local pages into location >1F00. R0 returns the length of the list which is also stored at 1FFE.

Error codes

| Code | Meaning |
|---|---|
| 00 | No error |
| 01 | Not enough free pages |
| 02 | Can't remap execution page zero |
| 03 | No page at local address |
| 04 | User area not large enough for list |
| 05 | Shared type already defined |
| 06 | Shared type doesn't exist |
| 07 | Can't overlay shared and private memory |
| 08 | Out of table space |